After leaving Liverpool and moving to Antibes, I’ve been keeping myself occupied while I search for what to do next by catching up on the latest biofeedback applications and trying them out. This week I’ve been going through the apps on the NeuroSky store.

On the whole, I’m not impressed: pickings are slim at best, and the apps that are there feel incomplete. Either developers are using alternative stores to sell their wares, or development with the NeuroSky headset is focused elsewhere (e.g. research or hobby projects) rather than on commercial products. The best experience I’ve had so far with the NeuroSky is playing the Star Wars Force Trainer, which I bought when I first started working in Liverpool. It’s a well-designed product and very easy for players new to brainwave control to pick up and have a go. It always goes down well at training events I’ve run!

Anyway, one of the things that has bugged me over the years is the attention and meditation metrics NeuroSky provides. According to the manual, the attention value is supposed to reflect:

the intensity of a user’s level of mental “focus” or “attention”, such as that which occurs during intense concentration and directed (but stable) mental activity

From the MindSet Communications Protocol document

…and the meditation value is supposed to reflect:

Meditation meter of the user, which indicates the level of a user’s mental “calmness” or “relaxation”. […] Note that Meditation is a measure of a person’s mental levels, not physical levels,

From the MindSet Communications Protocol document

From the apps I’ve played around with, developers tend to rely exclusively on these two measures. The problem I have with these metrics lies in their proprietary nature: nobody outside NeuroSky knows what either of these signals actually is in terms of EEG activity (I would guess, for example, that the meditation metric is based on alpha wave activity). Consequently, when you’re trying to manipulate a mechanic built upon these metrics (e.g. in Neuronauts, speed is controlled by attention) and something goes wrong, you can’t exactly consult the research literature to debug it, because only NeuroSky knows what the measure represents (see PhysiologicalComputing.net for more on this).

So today I decided to perform some basic mental inductions to see what effect they would have on the attention and meditation metrics collected by the NeuroSky headset. I was particularly interested in whether there were any significant differences between the inductions I was going to use, which would at least indicate that the metrics can be manipulated, and manipulated consistently.

Using PsychoPy I mocked up a little experiment. Wearing a NeuroSky MindBand, I sat in front of a screen that displayed an induction (e.g. relax) to be performed for 10 seconds. At the end of the period a short beep signalled me to stop the induction. There was a 5-second gap between inductions, and each induction was repeated 10 times in random order. For each trial, I recorded the average attention and meditation scores over the induction period.

I used the following three inductions for this task:

- do nothing: eyes open staring into space

- focus: I would focus on a fixed point in my environment

- relax: I would close my eyes
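The protocol above can be sketched in plain Python. This is not the original PsychoPy script: it is a minimal, hypothetical sketch that builds the randomised trial schedule (3 inductions × 10 repeats, 10-second inductions, 5-second gaps) and averages logged headset readings over each induction window. The names `make_schedule`, `trial_windows` and `average_window`, and the assumed `(timestamp, attention, meditation)` sample format, are illustrative only.

```python
import random
from statistics import mean

# Hypothetical parameters mirroring the protocol described in the text.
INDUCTIONS = ["do nothing", "focus", "relax"]
REPEATS = 10          # each induction is repeated 10 times
INDUCTION_SECS = 10   # duration of each induction
GAP_SECS = 5          # pause between consecutive inductions


def make_schedule(seed=None):
    """Return every induction REPEATS times, in random order."""
    trials = [name for name in INDUCTIONS for _ in range(REPEATS)]
    random.Random(seed).shuffle(trials)
    return trials


def trial_windows(schedule):
    """Pair each trial with its (start, end) induction window in seconds."""
    step = INDUCTION_SECS + GAP_SECS
    return [(name, i * step, i * step + INDUCTION_SECS)
            for i, name in enumerate(schedule)]


def average_window(samples, start, end):
    """Mean (attention, meditation) over samples with start <= t < end.

    `samples` is an assumed list of (timestamp_secs, attention, meditation)
    tuples logged from the headset. Returns None if no sample falls
    inside the window.
    """
    window = [(a, m) for t, a, m in samples if start <= t < end]
    if not window:
        return None
    return (mean(a for a, _ in window), mean(m for _, m in window))
```

Averaging each window yields one (attention, meditation) point per trial, which is the kind of data an X-Y plot of the inductions is built from.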

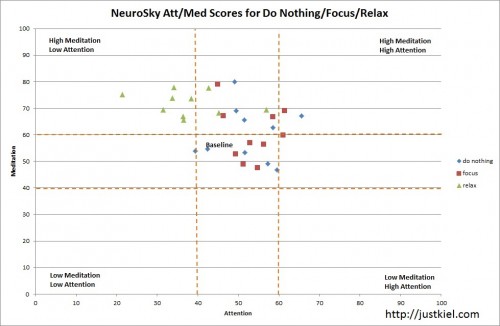

In theory there should be a clear delineation between the scores for each induction. The X-Y plot above shows the scores I collected.

Before discussing the graph, let’s quickly go over what the NeuroSky scores actually mean. According to the documentation, each metric provides a relative measure of state. While the metrics report values from 0 to 100, these are not absolute values. Instead, the range is split into 5 zones: a baseline (values 40-60), slightly elevated, elevated, slightly reduced and reduced. For example, a meditation value of 50 would be considered a baseline value, indicating neither a heightened nor a lowered meditative state. In the graph above I’ve highlighted the baseline zone, as readings there are of little interest (think of it as a dead zone in which readings don’t really mean anything).
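The zone scheme can be expressed as a small lookup, which is handy for ignoring dead-zone readings in an app. Only the 40-60 baseline is stated above; the other boundaries used here (20, 40, 60, 80) are an assumption of equal-width bands, so check them against NeuroSky’s documentation before relying on them. `esense_zone` and `in_dead_zone` are hypothetical helper names.

```python
def esense_zone(value):
    """Map a 0-100 attention or meditation reading to one of five zones.

    Assumption: only the 40-60 baseline comes from the text; the other
    boundaries are illustrative equal-width 20-point bands.
    """
    if not 0 <= value <= 100:
        raise ValueError("readings range from 0 to 100")
    if value < 20:
        return "reduced"
    if value < 40:
        return "slightly reduced"
    if value <= 60:
        return "baseline"
    if value <= 80:
        return "slightly elevated"
    return "elevated"


def in_dead_zone(value):
    """True when a reading sits in the baseline zone and says little."""
    return esense_zone(value) == "baseline"
```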

Going back to the graph, the first thing I would worry about as a developer is the lack of separation between the focus and do-nothing inductions. I would have hoped to see the focus readings clustering in the bottom right-hand corner of the graph (high attention, low meditation), but no such luck: they’re registering in the attention baseline zone. The relax induction clusters as expected in the top left of the chart (high meditation, low attention), but I would have been really surprised if it hadn’t had an effect, as the meditation metric screams alpha waves, which are easily induced by closing the eyes (which also, incidentally, minimizes signal noise from eye blinks and forehead muscle movement).
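Because the meditation metric is a black box, one transparent alternative is to measure alpha activity directly from the raw EEG. The sketch below is not NeuroSky’s algorithm; it is a swapped-in, textbook measure: the share of 1-40 Hz periodogram power that falls in the alpha band (conventionally around 8-12 Hz; exact edges vary between sources). The 512 Hz sample rate is an assumption, so check it for your headset, and `band_power` and `relative_alpha` are hypothetical names.

```python
import numpy as np

FS = 512  # assumed raw EEG sample rate (Hz); check your headset's docs


def band_power(signal, band, fs=FS):
    """Summed periodogram power of `signal` between band[0] and band[1] Hz."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()  # remove the DC offset so it doesn't swamp low bins
    spectrum = np.abs(np.fft.rfft(x)) ** 2 / len(x)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return float(spectrum[in_band].sum())


def relative_alpha(signal, fs=FS):
    """Alpha (8-12 Hz) power as a fraction of total 1-40 Hz power."""
    alpha = band_power(signal, (8.0, 12.0), fs)
    total = band_power(signal, (1.0, 40.0), fs)
    return alpha / total if total > 0 else 0.0
```

Comparing `relative_alpha` over eyes-closed and eyes-open windows gives a debuggable check of the same effect the relax induction appears to drive.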

There are several ways of measuring attention, so figuring out what went wrong with the focus induction, if anything, is problematic (to get a sense of the various ways to measure attention, check out this paper testing engagement metrics). The choice of induction might not suit the attention metric, or there could be other factors at play, but there really isn’t a way to check without understanding the underlying signal it’s based on.

While these measures are proprietary, they do offer developers without experience in these signals an easy way of conceptualising brainwave activity, and thereby a means to develop their own brain-controlled applications. However, I would advise approaching these measures with a degree of skepticism; don’t take them at face value. Instead, consider the type of induction you want the user to employ to control your app, then validate whether or not that induction changes the measure both discernibly and consistently. If not, select another induction, or develop mechanics using the raw and frequency-transform data the headset provides, as these are values you can debug.
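One way to run that validation, sketched under assumptions: treat each trial’s average score as one observation, then compare two inductions with a two-sided permutation test (consistency) and Cohen’s d (how discernible the shift is). The choice of test and any threshold you apply to its output are illustrative, not NeuroSky guidance, and the function names are hypothetical.

```python
import math
import random
from statistics import stdev


def _mean(xs):
    # fsum is exactly rounded, so the result doesn't depend on element order
    return math.fsum(xs) / len(xs)


def permutation_p_value(a, b, n_perm=10_000, seed=0):
    """Two-sided permutation test on the difference in means of a and b."""
    rng = random.Random(seed)
    observed = abs(_mean(a) - _mean(b))
    pooled = list(a) + list(b)
    n_a = len(a)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        if abs(_mean(pooled[:n_a]) - _mean(pooled[n_a:])) >= observed:
            hits += 1
    # +1 correction: the observed labelling counts as one permutation
    return (hits + 1) / (n_perm + 1)


def cohens_d(a, b):
    """Standardised mean difference using the pooled standard deviation."""
    n_a, n_b = len(a), len(b)
    pooled_var = ((n_a - 1) * stdev(a) ** 2
                  + (n_b - 1) * stdev(b) ** 2) / (n_a + n_b - 2)
    return (_mean(a) - _mean(b)) / math.sqrt(pooled_var)
```

For example, pass the per-trial attention averages from the focus trials as `a` and those from the do-nothing trials as `b`. A large p-value or a small effect size suggests the induction does not move the metric enough to build a mechanic on.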

The problem I find with a lot of the NeuroSky apps I’ve used so far is their tendency to assume users are already as skilled at manipulating their brainwaves as they are with a keyboard and mouse. For a new type of controller requiring a wholly new type of skill, this is an unrealistic expectation on the part of app designers and needs to be addressed. At least when motion games were introduced, the controller was new but the skills required to play drew on ones you already had. When was the last time you had to actively control your brainwave frequencies in order to do something?

Bonus

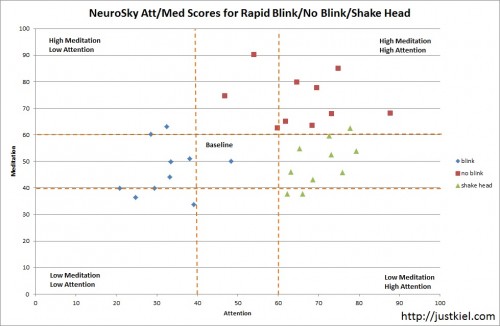

I ran a second experiment using inductions that I knew would generate signal noise:

- rapid blink: continuous rapid eye blinking

- no blink: no eye blinking

- shake head: continuous rapid head shaking

You can see there is a clear delineation between all 3 inductions. If you ever wanted to cheat at a NeuroSky game, you could use these inductions to artificially induce the desired states seen above. Not that I would condone cheating (pay no attention to my old website motto).

Hi,

I am currently doing a project using the NeuroSky MindWave and I would like to know how to get the charts you obtained (showing the attention and meditation levels).

I’m not exactly sure what you mean; could you please clarify? If you mean how I made the charts, I made them myself in Excel.
